Color Measurement across images of variable seeing

Colors must be measured in identical apertures in images of similar seeing, so care must be taken when dealing with images that have different seeing. The simplest approach is to degrade all images to the poorest seeing. However, much information is lost this way. It is preferable to measure colors in the undegraded images when possible, and then to degrade images only as much as necessary to match the seeing of each blurry image.
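A common way to degrade a sharper image to poorer seeing is to convolve it with a Gaussian kernel. The sketch below assumes that both PSFs are Gaussian, in which case their widths add in quadrature under convolution. Real PSFs are rarely exactly Gaussian, so treat this as a first approximation, not as the procedure used here. The function names are hypothetical, and the FWHM values can be in arcseconds or pixels as long as both use the same unit.

```python
import math

# For a Gaussian: FWHM = 2 * sqrt(2 * ln 2) * sigma (about 2.3548 * sigma).
FWHM_PER_SIGMA = 2.0 * math.sqrt(2.0 * math.log(2.0))


def matching_kernel_fwhm(fwhm_sharp, fwhm_blurry):
    """FWHM of the Gaussian kernel that degrades a sharp image to blurrier seeing.

    Assumes Gaussian PSFs, so widths add in quadrature:
    fwhm_blurry**2 = fwhm_sharp**2 + fwhm_kernel**2
    """
    if fwhm_blurry < fwhm_sharp:
        raise ValueError("target seeing must be at least as poor as the input seeing")
    return math.sqrt(fwhm_blurry**2 - fwhm_sharp**2)


def fwhm_to_sigma(fwhm):
    """Convert a Gaussian FWHM to its standard deviation (same units)."""
    return fwhm / FWHM_PER_SIGMA


# Example: degrade a 0.6" detection image to match 1.0" seeing in the red image.
kernel_fwhm = matching_kernel_fwhm(0.6, 1.0)
print(round(kernel_fwhm, 3))  # 0.8
```

Degrading each sharper image only to the seeing of the particular blurry image it is compared against, rather than to the single worst seeing, is what preserves the information mentioned above.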

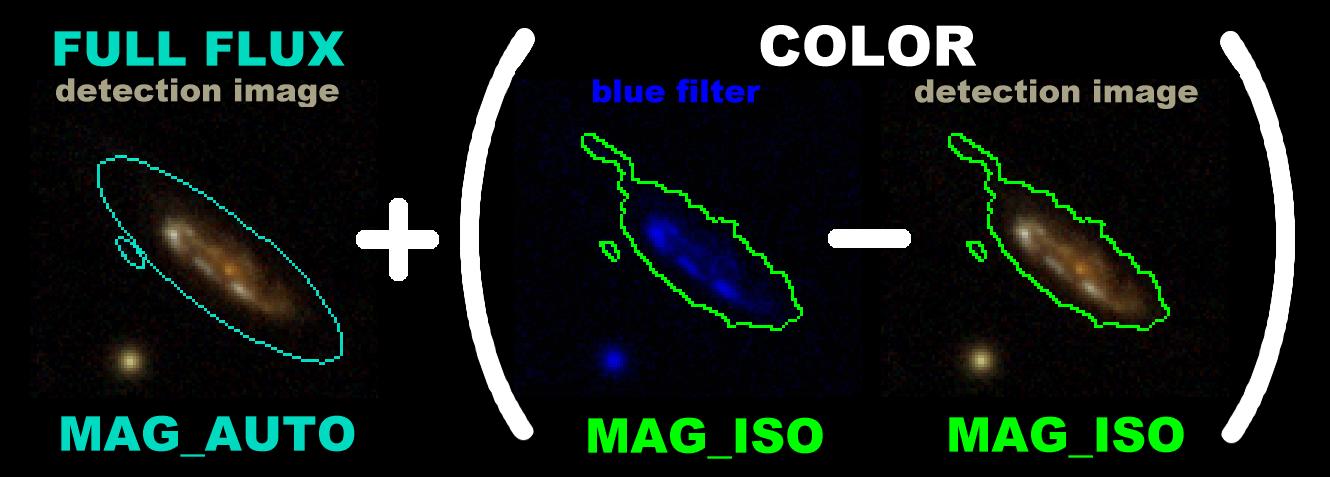

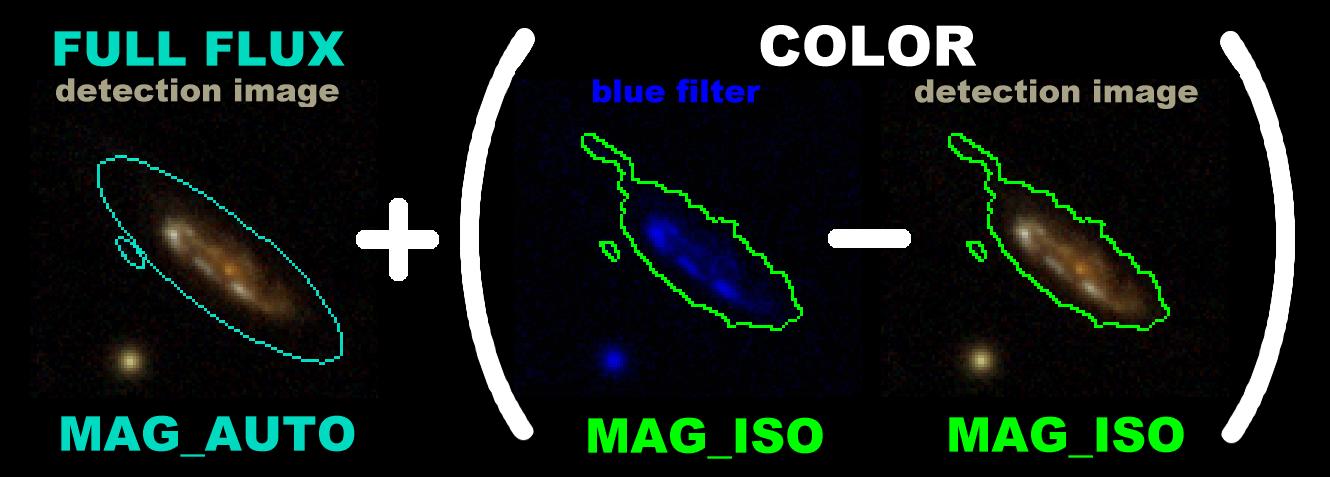

This technique is illustrated below. First we demonstrate a color measurement (r - b) in images with good seeing. The blue and red magnitudes (b and r) are measured as shown.

The technique may seem convoluted, but it lays a solid foundation for introducing images with poor seeing:

Blue magnitude =

Red magnitude =

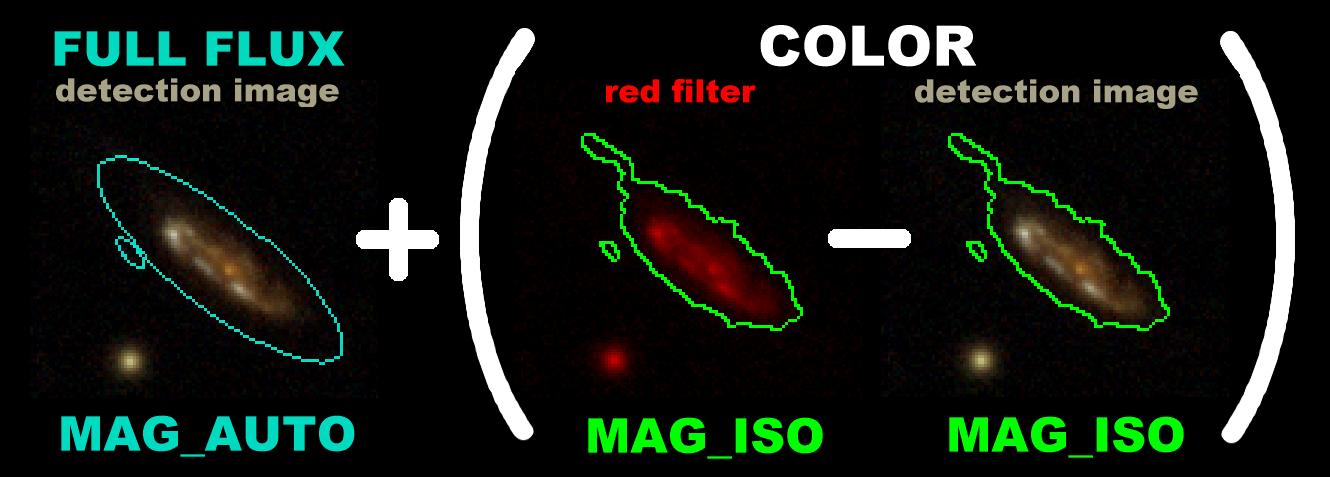

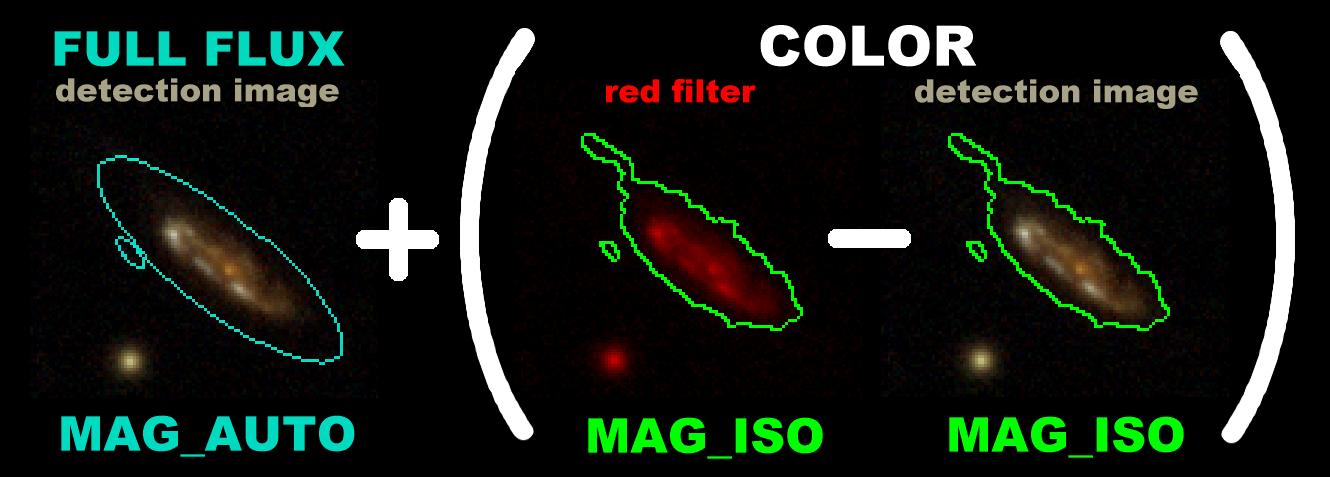
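The key requirement is that both magnitudes come from the same set of pixels. Here is a minimal sketch of that idea, assuming sky-subtracted images already on a common pixel grid, a single segmentation map defining the isophotal aperture, and made-up zeropoints; plain Python lists stand in for image arrays, and all names are hypothetical. It is a simplified illustration, not SExtractor's own MAG_ISO code.

```python
import math


def aperture_flux(image, segmap, obj_id):
    """Sum the pixels of `image` that the segmentation map assigns to obj_id."""
    return sum(
        pix
        for img_row, seg_row in zip(image, segmap)
        for pix, seg in zip(img_row, seg_row)
        if seg == obj_id
    )


def magnitude(flux, zeropoint):
    """Convert a positive flux to a magnitude with the given zeropoint."""
    return zeropoint - 2.5 * math.log10(flux)


def aperture_color(image_1, image_2, segmap, obj_id, zp_1, zp_2):
    """Color m_1 - m_2, measured in the *same* aperture in both images."""
    m_1 = magnitude(aperture_flux(image_1, segmap, obj_id), zp_1)
    m_2 = magnitude(aperture_flux(image_2, segmap, obj_id), zp_2)
    return m_1 - m_2


# Toy 3x3 example: object 1 occupies a plus-shaped isophotal aperture;
# the corner pixels lie outside it and are ignored in both bands.
segmap = [[0, 1, 0],
          [1, 1, 1],
          [0, 1, 0]]
blue = [[0.5, 2.0, 0.5],
        [2.0, 2.0, 2.0],
        [0.5, 2.0, 0.5]]
red = [[5.0, 20.0, 5.0],
       [20.0, 20.0, 20.0],
       [5.0, 20.0, 5.0]]

r_minus_b = aperture_color(red, blue, segmap, 1, zp_1=25.0, zp_2=25.0)
print(round(r_minus_b, 3))  # -2.5
```

Because both sums use the identical aperture, the color reflects the flux ratio (here 100 / 10) rather than differences in how much of each image the aperture happens to capture.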

Now suppose that our red image is blurry instead. Can we still obtain the same accurate color measurement, r - b? Yes, but we must degrade the seeing of our detection image to match the seeing of the red image. We then measure the color r - d (red minus detection) across these images of matched seeing.

Then we add the MAG_AUTO measured in the detection image, yielding a total red magnitude:

Red magnitude =

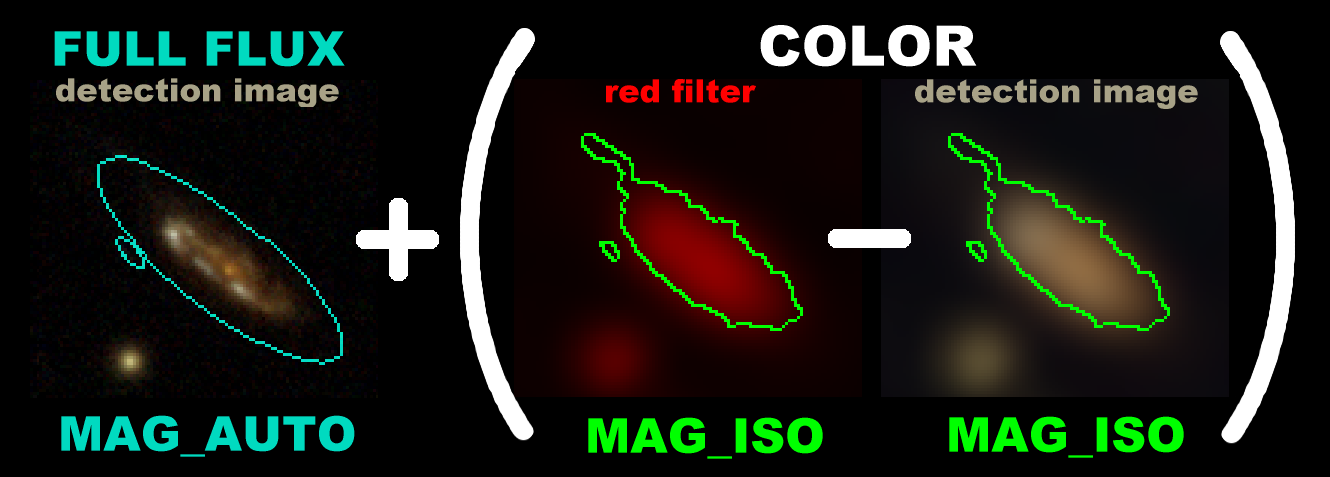

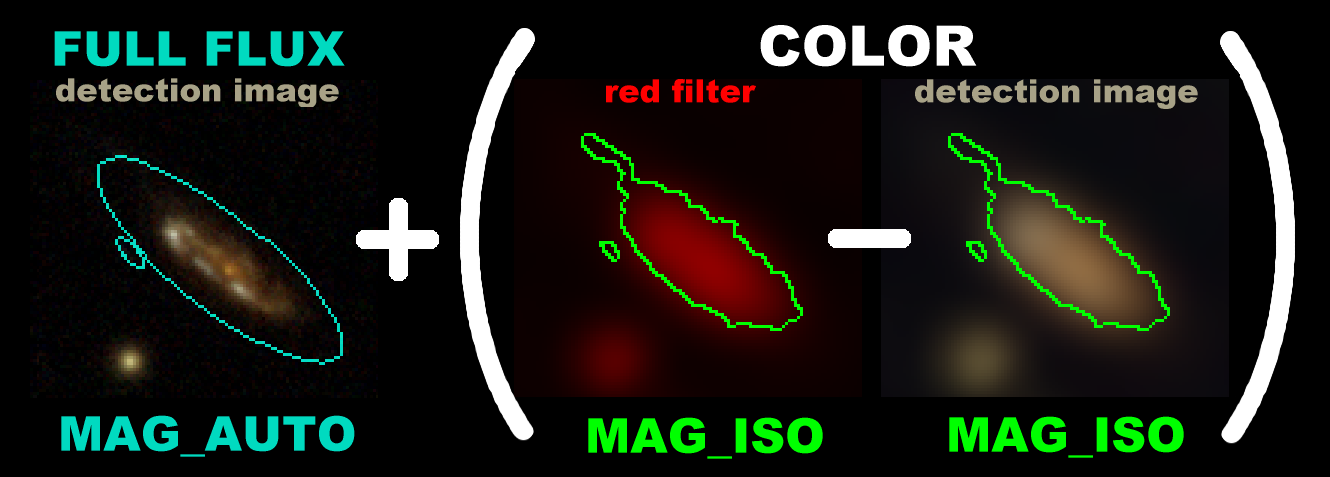

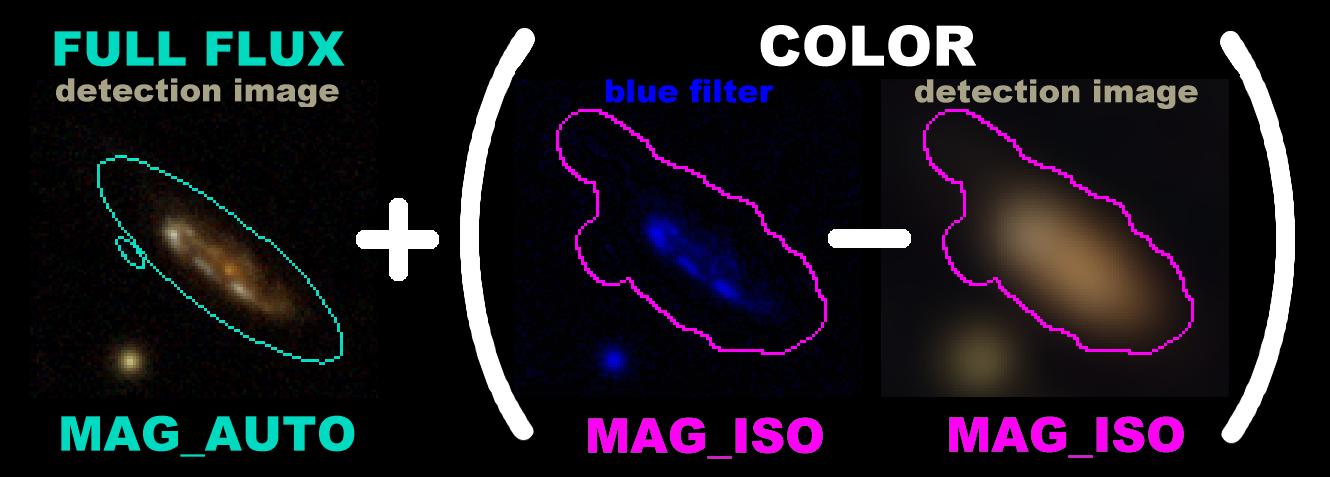
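The bookkeeping described above can be sketched as a single helper. The red formula follows the text directly (detection MAG_AUTO plus the isophotal color r - d measured at matched seeing). Applying the same form to the blue band, with an undegraded detection image because the seeing already matches, is an assumption on our part, as are the helper name and all the numbers, which are made up for illustration.

```python
def total_magnitude(det_mag_auto, band_mag_iso, det_mag_iso_matched):
    """Total magnitude in one band (hypothetical helper).

    det_mag_auto        -- MAG_AUTO of the object in the detection image
    band_mag_iso        -- isophotal magnitude in the band's own image
    det_mag_iso_matched -- isophotal magnitude in the detection image,
                           degraded (if needed) to the band's seeing and
                           measured in the same aperture as band_mag_iso
    """
    isophotal_color = band_mag_iso - det_mag_iso_matched  # e.g. r - d
    return det_mag_auto + isophotal_color


# Illustrative (made-up) numbers for one object.
d_auto = 22.0
# Blue image has the same seeing as the detection image: no degradation.
b = total_magnitude(d_auto, band_mag_iso=23.0, det_mag_iso_matched=22.4)
# Red image is blurry: compare against the detection image degraded to red seeing.
r = total_magnitude(d_auto, band_mag_iso=22.1, det_mag_iso_matched=22.5)
print(round(b, 2), round(r, 2), round(r - b, 2))  # 22.6 21.6 -1.0
```

Note that the detection MAG_AUTO cancels in r - b, so the color depends only on the two matched-seeing isophotal colors, while each band still gets a total magnitude.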

If you're like me, then you may worry that red flux is leaking out of the sides of the aperture. Normally this isn't a concern, because our blurrier images are also noisier. The isophotal apertures in the blurry (red) image are usually smaller than those in the crisp (blue) image, since fewer of their pixels rise above the detection threshold. So increasing the aperture size would only collect more noise.

But if the object is red enough (has a large r - b decrement), then the red isophotal aperture will be larger than the blue isophotal aperture. In these cases we would still like to use the larger isophotal aperture.
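The trade-off behind this choice can be illustrated with a toy signal-to-noise calculation. The sketch assumes background-limited, uncorrelated noise with the same sigma in every pixel, so the noise grows as the square root of the number of pixels. Degrading an image by convolution actually correlates its noise, which this ignores; the names and numbers are hypothetical.

```python
import math


def aperture_snr(pixel_fluxes, sigma_per_pixel):
    """Signal-to-noise of the summed flux in an aperture.

    Simplifying assumption: background-limited, uncorrelated noise with the
    same sigma in every pixel, so the noise grows as sqrt(number of pixels).
    """
    signal = sum(pixel_fluxes)
    noise = sigma_per_pixel * math.sqrt(len(pixel_fluxes))
    return signal / noise


core = [10.0] * 4  # pixels inside the smaller isophotal aperture
snr_core = aperture_snr(core, sigma_per_pixel=2.0)
# Growing the aperture over 5 pixels that hold no source flux:
snr_empty = aperture_snr(core + [0.0] * 5, sigma_per_pixel=2.0)
# Growing it over 5 pixels where a very red object still has real flux:
snr_wings = aperture_snr(core + [5.0] * 5, sigma_per_pixel=2.0)
print(round(snr_core, 2), round(snr_empty, 2), round(snr_wings, 2))  # 10.0 6.67 10.83
```

Enlarging the aperture lowers the S/N when the extra pixels carry only noise, but raises it when they contain real flux, which is the case for a sufficiently red object.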

We have a routine, `sppatchnew`, that compares two segmentation maps and adopts the larger aperture where necessary.
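The internals of `sppatchnew` are not described here, so the following is only a hypothetical sketch of the stated idea: for each object, keep whichever of two segmentation maps gives it the larger area. It assumes both maps share a pixel grid and object IDs, uses 0 for background, and never lets an enlarged aperture overwrite pixels that the first map assigns to a different, unchanged object. The function name `patch_segmaps` is invented for illustration.

```python
from collections import Counter


def patch_segmaps(seg_a, seg_b):
    """Per object, adopt whichever segmentation map gives the larger aperture.

    seg_a, seg_b: 2-D lists on the same pixel grid; 0 = background,
    positive integers = object IDs shared between the two maps.
    """
    area_a = Counter(v for row in seg_a for v in row if v)
    area_b = Counter(v for row in seg_b for v in row if v)
    # Objects whose aperture is larger in seg_b than in seg_a.
    grow = {obj for obj in area_b if area_b[obj] > area_a[obj]}

    patched = []
    for row_a, row_b in zip(seg_a, seg_b):
        new_row = []
        for a, b in zip(row_a, row_b):
            if a in grow:
                a = 0  # drop the smaller seg_a footprint of a growing object
            if a == 0 and b in grow:
                a = b  # adopt the larger seg_b footprint, only on free pixels
            new_row.append(a)
        patched.append(new_row)
    return patched


# Object 1 is larger in seg_b (e.g. red); object 2 is larger in seg_a (e.g. blue).
seg_blue = [[1, 0, 2, 2],
            [1, 0, 2, 2]]
seg_red = [[1, 1, 0, 2],
           [1, 1, 0, 0]]
print(patch_segmaps(seg_blue, seg_red))  # [[1, 1, 2, 2], [1, 1, 2, 2]]
```

Each object ends up with its larger footprint: object 1 takes its red aperture and object 2 keeps its blue one.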

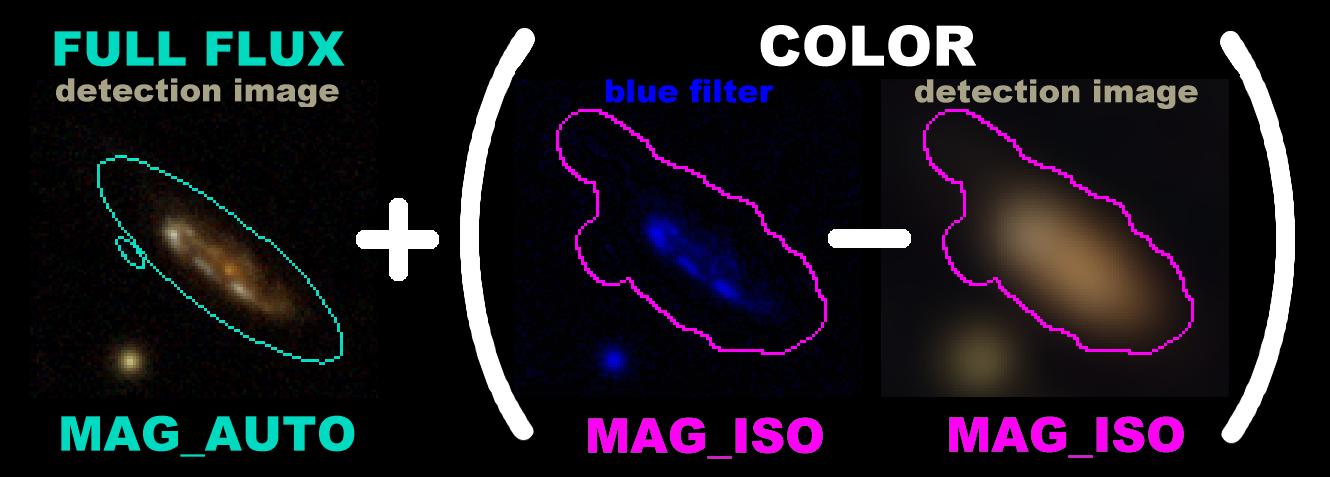

In this example, had we adopted a larger aperture for our object, our color determination would have looked like this:

Blue magnitude =

Red magnitude =